Week 9


MANUSCRIPT ID

- Neuronal ensemble control of prosthetic devices by a human with tetraplegia

- Leigh R. Hochberg, Mijail D. Serruya, Gerhard M. Friehs, Jon A. Mukand, Maryam Saleh, Abraham H. Caplan, Almut Branner, David Chen, Richard D. Penn and John P. Donoghue, Nature 442, 164-171 (13 July 2006)

- PURPOSE: Neuromotor prostheses (NMPs) aim to replace or restore lost motor functions in paralysed humans by routeing movement-related signals from the brain, around damaged parts of the nervous system, to external effectors. To translate preclinical results from intact animals to a clinically useful NMP, movement signals must persist in cortex after spinal cord injury and be engaged by movement intent when sensory inputs and limb movement are long absent. Furthermore, NMPs would require that intention-driven neuronal activity be converted into a control signal that enables useful tasks. Here we show initial results for a tetraplegic human (MN) using a pilot NMP. Neuronal ensemble activity recorded through a 96-microelectrode array implanted in primary motor cortex demonstrated that intended hand motion modulates cortical spiking patterns three years after spinal cord injury. Decoders were created, providing a 'neural cursor' with which MN opened simulated e-mail and operated devices such as a television, even while conversing. Furthermore, MN used neural control to open and close a prosthetic hand, and perform rudimentary actions with a multi-jointed robotic arm. These early results suggest that NMPs based upon intracortical neuronal ensemble spiking activity could provide a valuable new neurotechnology to restore independence for humans with paralysis.

- Neuromotor Prostheses • Tetraplegia • Neuronal ensembles • Control Signal • Linear Filter

MANUSCRIPT Details

- Introduction/Aims

- Previous work: Current assistive technologies rely on devices for which an extant function provides a signal that substitutes for missing actions. For example, cameras can monitor eye movements that can be used to point a computer cursor. Although these surrogate devices have been available for some time, they are typically limited in utility, cumbersome to maintain, and disruptive of natural actions. For instance, gaze towards objects of interest disrupts eye-based control. By contrast, an NMP is a type of brain–computer interface (BCI) that can guide movement by harnessing the existing neural substrate for that action—that is, neuronal activity patterns in motor areas.

For this technology to be successful, there are three basic requirements:

- There must be persistent activity in the relevant brain area (in this case M1), despite injury to the spinal cord.
- This activity must be under the subject's voluntary control (this allows the construction of a control signal).
- This activity must be movement- and limb-specific.

- Methods

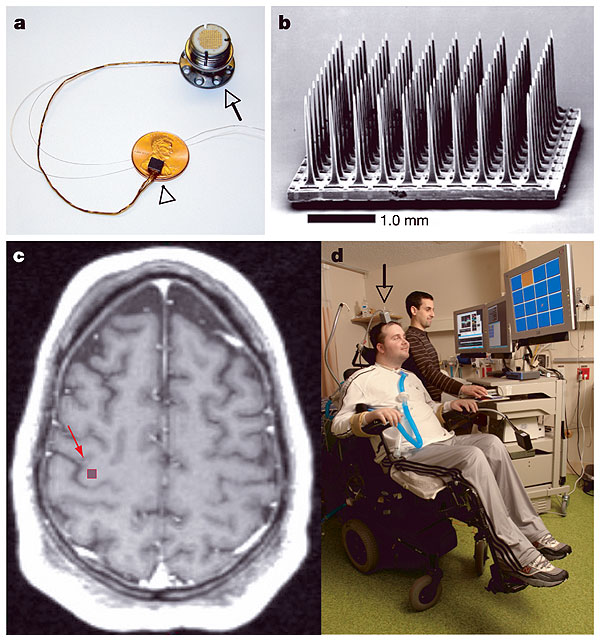

The bulk of the reported results come from studies of participant 1, MN, a 24-year-old man who in 2001, about three years before implantation, received a knife wound to the neck that severed his spinal cord between C3 and C4. He was implanted with a 3 mm x 3 mm microelectrode array (10x10 electrodes) in the arm/hand "knob" of M1 (Fig. 1).

a, The BrainGate sensor. b, Scanning electron micrograph of the 100-electrode sensor, 96 of which are available for neural recording. c, Pre-operative axial T1-weighted MRI of the brain of participant 1. The arm/hand 'knob' of the right precentral gyrus (red arrow) corresponds to the approximate location of the sensor implant site. d, The first participant in the BrainGate trial (MN). The grey box (arrow) connected to the percutaneous pedestal contains amplifier and signal conditioning hardware; cabling brings the amplified neural signals to computers sitting beside the participant.

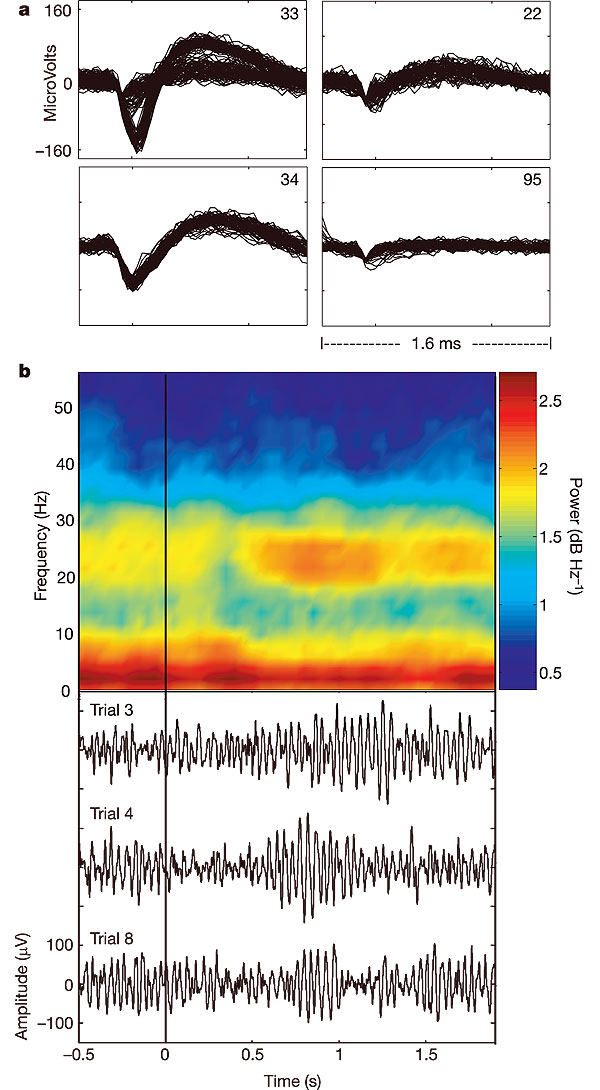

a, Discriminated neural activity (n = 80 superimposed action potentials for each unit). On electrode 33, two neuronal units could be reliably discriminated, with peak-to-peak amplitudes of 206 and 56 µV, respectively. For electrode 34, a single unit is displayed. Electrode 22 illustrates a low-amplitude discriminated signal. Electrode 95 shows triggered noise. Data are from 90 days after array placement.

b, Local field potentials during neural cursor control. In the bottom panel, three traces of electrical recording (bandpass: 10–100 Hz) from one electrode are shown 0.5 s before and 1.9 s after the go cue instructing MN to move the cursor from the centre position to a target at the top of the screen. Power spectrograms were averaged across 20 trials to create the resulting pseudocolour power spectral density (PSD) plot. In the 20–30-Hz band, a decrease in power is seen approximately 300 ms after the go cue, followed by an increase in power from 550–1,200 ms after the go cue, which can also be appreciated in the raw, single trial data below.
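The PSD plot in panel b rests on a simple idea: estimate spectral power in short windows around the go cue, then average across trials. The sketch below illustrates that idea with a plain, untapered FFT periodogram in NumPy. The window length, step size, and lack of tapering are illustrative assumptions, not the authors' analysis parameters.

```python
import numpy as np

def band_power(segment, fs, f_lo, f_hi):
    """Mean periodogram power of `segment` in the band [f_lo, f_hi] Hz."""
    segment = np.asarray(segment, dtype=float)
    segment = segment - segment.mean()                  # remove the DC offset
    spectrum = np.fft.rfft(segment)
    freqs = np.fft.rfftfreq(segment.size, d=1.0 / fs)
    psd = np.abs(spectrum) ** 2 / (fs * segment.size)   # unwindowed periodogram
    in_band = (freqs >= f_lo) & (freqs <= f_hi)
    return psd[in_band].mean()

def band_power_timecourse(trials, fs, win_s=0.25, step_s=0.05, f_lo=20.0, f_hi=30.0):
    """Band power in sliding windows, averaged across trials (rows of `trials`).

    Hypothetical defaults: 250 ms windows, 50 ms steps, 20-30 Hz band.
    """
    trials = np.atleast_2d(np.asarray(trials, dtype=float))
    win = int(round(win_s * fs))
    step = int(round(step_s * fs))
    starts = range(0, trials.shape[1] - win + 1, step)
    power = np.array([[band_power(trial[s:s + win], fs, f_lo, f_hi) for s in starts]
                      for trial in trials])
    return power.mean(axis=0)   # one trial-averaged value per window start
```

With an assumed 1 kHz sampling rate, an array of shape (20, 2400) holding 0.5 s before and 1.9 s after the go cue would yield one trial-averaged 20–30 Hz power value per 50 ms step.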

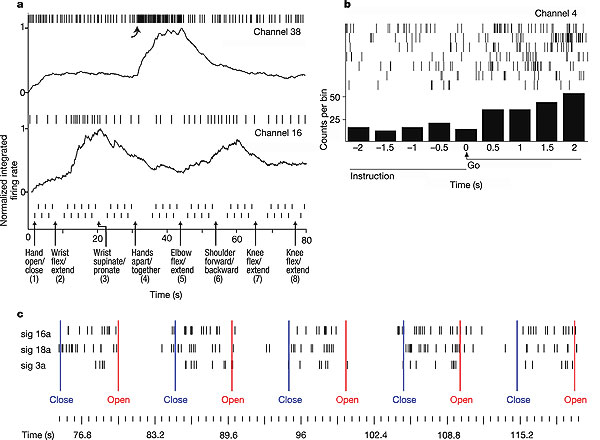

a, Over an 80-s period, MN was asked to imagine performing a series of left limb movements. Movement instruction time is indicated by a vertical arrow; the go cue for each alternating movement, presented as text on the video monitor, is indicated by a small vertical hash mark. Spiking activity of two simultaneously recorded units is displayed. Normalized, integrated firing rates (R) appear beneath each raster. Normalization is achieved by dividing by the maximum integrated firing rate from each unit's spike train over the time period displayed. All movements are imagined except for shoulder movement, which MN actually performed.
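The normalization in panel a is straightforward to express. The caption does not say how the firing rate was integrated, so the sketch below assumes spike counts in 50 ms bins summed over a trailing 1 s window. Both values are hypothetical; only the final division by each unit's maximum follows the caption.

```python
import numpy as np

def normalized_integrated_rate(spike_times, t_start, t_stop, bin_s=0.05, window_s=1.0):
    """Trailing-window firing rate of one unit, scaled so that its maximum is 1.

    bin_s and window_s are hypothetical choices; the caption does not give them.
    """
    n_bins = int(round((t_stop - t_start) / bin_s))
    edges = t_start + bin_s * np.arange(n_bins + 1)
    counts, _ = np.histogram(spike_times, bins=edges)
    k = max(1, int(round(window_s / bin_s)))                # bins per integration window
    integrated = np.convolve(counts, np.ones(k))[:n_bins]   # causal running sum of spikes
    rate = integrated / (k * bin_s)                         # spikes per second
    peak = rate.max()
    return rate / peak if peak > 0 else rate                # R lies in [0, 1]
```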

b, Go-cue-related activity modulation for a neuron recorded simultaneously with those in a. Each raster line is centred about the go cue, which requests that the patient imagine a movement; the seven raster lines represent the epochs surrounding each of the seven different movements in sequence a. The histogram displays the total number of spikes seen in each 500-ms bin. This neuron increased its firing rate during most imagined movement epochs, but demonstrated poor instruction selectivity compared to the neurons presented in a.
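Panel b is a peri-event raster with a summed histogram. The sketch below aligns spike times to each go cue and sums counts across epochs in 500 ms bins, as in the caption. The ±2 s window is an assumption, since the caption does not give the plotted time range.

```python
import numpy as np

def peri_event_histogram(spike_times, event_times, pre_s=2.0, post_s=2.0, bin_s=0.5):
    """Align spikes to each event and sum counts across events in fixed-width bins.

    pre_s and post_s are assumed; bin_s = 0.5 follows the caption's 500 ms bins.
    Returns (bin_edges, summed_counts, per_event_rasters), times relative to the event.
    """
    spike_times = np.asarray(spike_times, dtype=float)
    n_bins = int(round((pre_s + post_s) / bin_s))
    edges = -pre_s + bin_s * np.arange(n_bins + 1)
    counts = np.zeros(n_bins, dtype=int)
    rasters = []
    for event in event_times:
        rel = spike_times - event                        # spike times relative to the cue
        rel = rel[(rel >= -pre_s) & (rel < post_s)]      # keep only the peri-event window
        rasters.append(rel)                              # one raster line per epoch
        counts += np.histogram(rel, bins=edges)[0]
    return edges, counts, rasters
```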

c, Hand-instruction-related modulation for three simultaneously recorded neurons. MN was cued to open and close his hand by text instructions presented on the screen. These neurons increased their firing rates when MN was instructed to close his hand, consistent with activity related to the intention to close it.

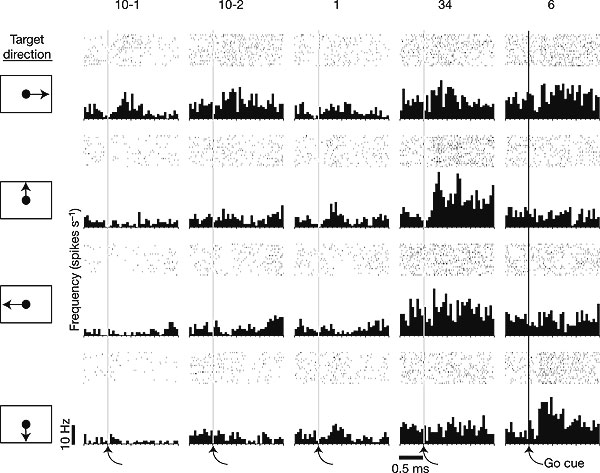

Spike rates for five neurons recorded simultaneously during a four-direction centre-out task (day 90), in which MN used the neural cursor to acquire a target presented at the right, top, left, or bottom of the screen (n = 20 trials per direction). Increases in activity after the go cue demonstrate movement-intention-related modulation. Each column shows the firing of one unit in the four directions, aligned on the cue to move. Changes in firing across the five neurons reveal directional tuning.

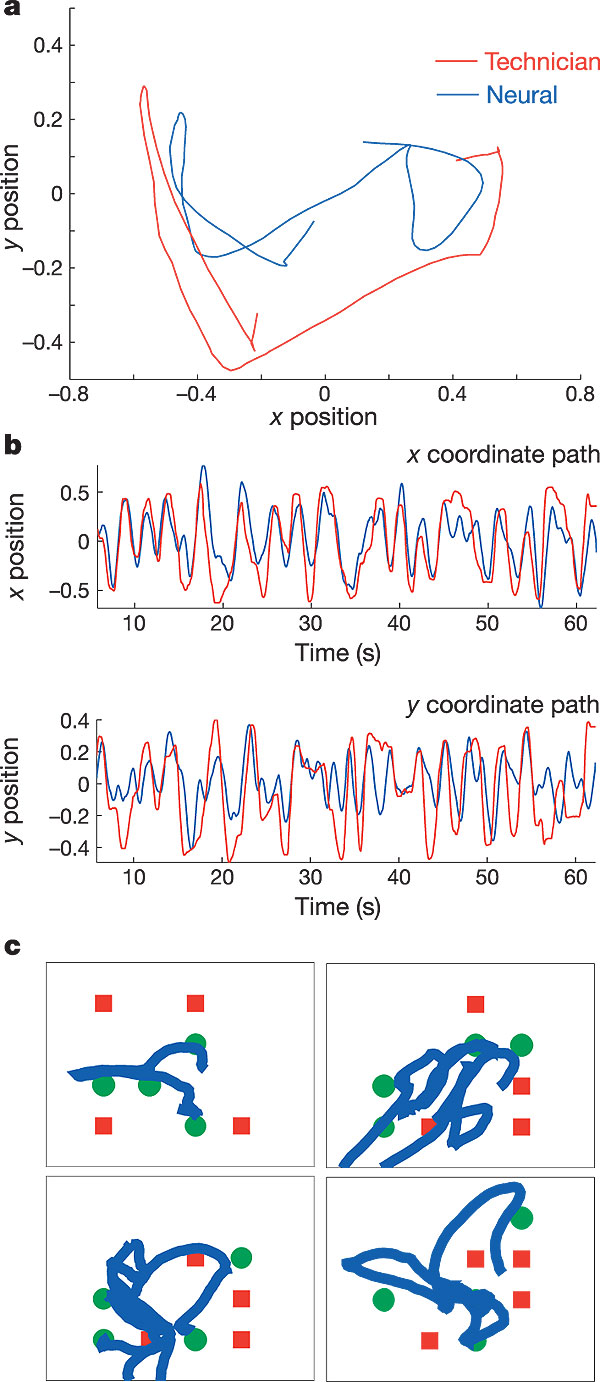

a, MN was asked to track the technician cursor with his neural cursor in real time. MN was able to track the general direction of the technician cursor with the neural cursor, changing directions quickly, while having some difficulty in overlaying the cursors precisely. Trial day 90. b, x, y position control over time during one tracking trial (last 1-min epoch of filter building). The top panel displays the x coordinate position of the technician cursor (red) and the neural cursor (blue); y positions for the same movement are shown in the bottom panel. c, Neural cursor position during a target acquisition/obstacle avoidance task. The four panels represent the four epochs shown in Supplementary Video 5. Green circles indicate targets; red squares indicate obstacles. The thick blue line indicates the path taken by the neural cursor and illustrates the ability to avoid most obstacles and acquire most targets within a randomly arranged field. Data are from trial day 90.

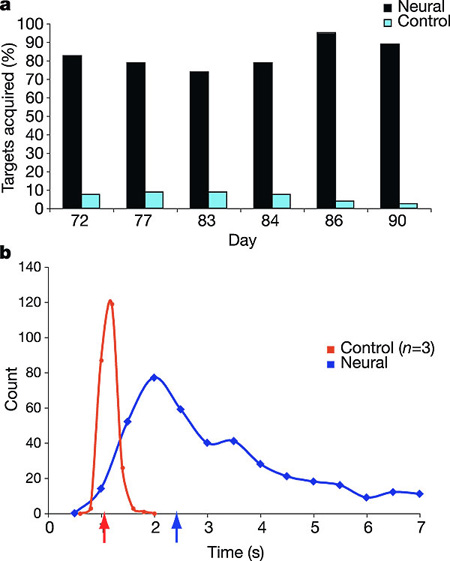

a, Target acquisition accuracy during the centre-out task. For each of six sessions, MN acquired between 73–95% of the radially placed targets. Control targets were not present on the monitor during task performance, but were marked as acquired if, during post-hoc analysis of the cursor movement, the cursor had traversed the location of one of the other three pseudo-randomly selected targets before the correct target (see Supplementary Video 1). Data from days 72, 77, 83, 84, 86, 90 are shown. b, Time-to-target performance during centre-out task for MN (blue) and three able-bodied controls (red). Only successful target acquisitions in <7 s are shown for MN. Arrows on the abscissa represent median times to target for each distribution. Controls' performances (n = 3 controls, 80 trials each) are collapsed into 0.2-s bins. MN's performance (398 trials) is collapsed into 0.5-s bins for visual clarity.
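The post-hoc control-target scoring in panel a amounts to checking which target a recorded cursor path entered first. A minimal sketch, assuming circular targets of a common radius and a path sampled as (x, y) points. The caption does not define "traversed" precisely, so the distance test here is a hypothetical stand-in.

```python
import numpy as np

def first_entry_index(path, center, radius):
    """Index of the first path sample inside a circular target, or None if never entered.

    Circular targets with a shared radius are an assumption, not the paper's definition.
    """
    dist = np.linalg.norm(np.asarray(path, dtype=float) - np.asarray(center, dtype=float), axis=1)
    inside = np.flatnonzero(dist <= radius)
    return int(inside[0]) if inside.size else None

def score_trial(path, target, control_targets, radius):
    """Return (target_acquired, control_acquired) for one centre-out trial.

    A control target counts as acquired if the path enters it before the real
    target (or at all, if the real target is never reached).
    """
    t_target = first_entry_index(path, target, radius)
    limit = len(path) if t_target is None else t_target
    control_hits = [first_entry_index(path, c, radius) for c in control_targets]
    control_acquired = any(i is not None and i < limit for i in control_hits)
    return t_target is not None, control_acquired
```

Averaging the first element over a session gives the acquisition rate; averaging the second gives a rough chance-level comparison.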

- Results

a) As shown in Figure 3, the subject met the requirements for a neural control signal listed above: M1 neurons remained active, and their activity was under voluntary control and specific to the imagined limb and movement. This was the case after three years without movement or kinaesthetic feedback.

b) Neural activity in M1 cells showed sufficient limb and movement specificity for the construction of a control signal via a linear filter.

c) A control signal was constructed simply from the subject's imagined hand movements while he imagined tracking a technician's cursor on screen.

d) Even before training, this control signal enabled fairly good control of vertical and horizontal movement of an on-screen neural cursor, as shown in a "center-out" task (MN acquired 73–95% of targets across six sessions).

e) Further training enabled the subject to make fine-tuned voluntary movements of the cursor. This was sufficient to track a technician's cursor, open a simulated e-mail account, control a television, and draw a crude circle in a simulated "Paint" program.

f) In addition, the subject was able to control a prosthetic hand and a robotic arm without further training, and in the absence of the familiar cursor interface.
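Items b and c describe a linear filter that maps recorded firing rates onto cursor position, fitted while MN imagined tracking the technician's cursor. A minimal sketch of such a filter, assuming binned firing rates, a fixed number of past bins per unit, and ordinary least squares. The lag count, function names, and simulated data in the check are hypothetical; the paper's exact filter settings are not given in these notes.

```python
import numpy as np

def lagged_design(rates, n_lags):
    """Current plus previous (n_lags - 1) bins of every unit, plus a bias column.

    rates: (T, N) array of binned firing rates.
    Returns an array of shape (T - n_lags + 1, N * n_lags + 1).
    """
    T = rates.shape[0]
    blocks = [rates[n_lags - 1 - k: T - k] for k in range(n_lags)]   # k = bins into the past
    return np.hstack(blocks + [np.ones((T - n_lags + 1, 1))])

def fit_linear_filter(rates, cursor_xy, n_lags=5):
    """Least-squares weights mapping lagged rates to (x, y) cursor position.

    n_lags = 5 is a hypothetical choice.
    """
    X = lagged_design(rates, n_lags)
    Y = cursor_xy[n_lags - 1:]            # align each target with its most recent bin
    W, *_ = np.linalg.lstsq(X, Y, rcond=None)
    return W

def decode(rates, W, n_lags=5):
    """Apply a fitted filter to new rates; the output starts at bin n_lags - 1."""
    return lagged_design(rates, n_lags) @ W
```

Using several past bins lets the filter weigh each unit's recent history rather than a single instantaneous rate, at the cost of more parameters to fit.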

- Discussion/Conclusion

a) This experiment demonstrates that the basic requirements for an NMP can be met in a human subject, with minimal side effects and without interfering with normal function (the subject was able to converse while performing tasks).

b) A relatively simple filter construction can yield a stable and easily fine-tuned control signal, sufficient for controlling a cursor in basic daily activities, as well as basic moving and grasping motions of a prosthetic hand or robotic arm.

c) The primary obstacles to implementing this technology widely are adapting the interface to a wider range of movements; increasing the usable lifetime of the implant (in both the first and the second participant, the second not discussed here, over 60% of recorded units were lost by 6 months, for reasons still under investigation); and making the interface practical and mobile (the present system is a large, bulky cart of recording and computer equipment that requires a skilled technician to operate).

- Additional links and supplementary information:

Current commercial applications:

Honda develops a brain-machine interface (as seen in my presentation) [1]

MANUSCRIPT ID

- Title

Learning to Control a Brain–Machine Interface for Reaching and Grasping by Primates

- Reference

Carmena JM, Lebedev MA, Crist RE, et al. Learning to control a brain-machine interface for reaching and grasping by primates. PLoS Biology. 2003;1(2):E42. Available at: http://www.ncbi.nlm.nih.gov/pubmed/14624244.

- Abstract

Reaching and grasping in primates depend on the coordination of neural activity in large frontoparietal ensembles. Here we demonstrate that primates can learn to reach and grasp virtual objects by controlling a robot arm through a closed-loop brain-machine interface (BMIc) that uses multiple mathematical models to extract several motor parameters (i.e., hand position, velocity, gripping force, and the EMGs of multiple arm muscles) from the electrical activity of frontoparietal neuronal ensembles. As single neurons typically contribute to the encoding of several motor parameters, we observed that high BMIc accuracy required recording from large neuronal ensembles. Continuous BMIc operation by monkeys led to significant improvements in both model predictions and behavioral performance. Using visual feedback, monkeys succeeded in producing robot reach-and-grasp movements even when their arms did not move. Learning to operate the BMIc was paralleled by functional reorganization in multiple cortical areas, suggesting that the dynamic properties of the BMIc were incorporated into motor and sensory cortical representations.

- Keywords

BMI, Brain-Machine Interface, Closed Loop, Directional Tuning, Primary Motor Cortex, Macaque, Linear Model, Robotics, Artificial Intelligence

- Input Author

JJM

MANUSCRIPT DETAILS

- Introduction/Aims

Spinal cord injuries and neurodegenerative diseases cause motor deficits in thousands of people every year. Typically, research aims to reconstruct the connectivity and function of the damaged areas (Schwab 2002). This paper instead aims to bypass the damaged area entirely by recording from motor control areas and using those signals to control an external robotic prosthesis.

Primary motor cortex and surrounding areas are thought to control voluntary movements, but there are many open questions about brain-machine interfaces that record from these areas (Taylor et al. 2002, Pesaran et al. 2002). These questions include:

*What type of brain signal is the best input? Single unit? Multiunit? Local Field Potentials?

*Which cortical region(s) contain the most information about motor movements, and should therefore be recorded from?

*What types of motor commands can be extracted from cortical activity?

*How does an artificial actuator like a robot arm affect performance?

*How does use of a BMI reorganize cortical functionality?

This paper aims to explore these questions.

- Methods

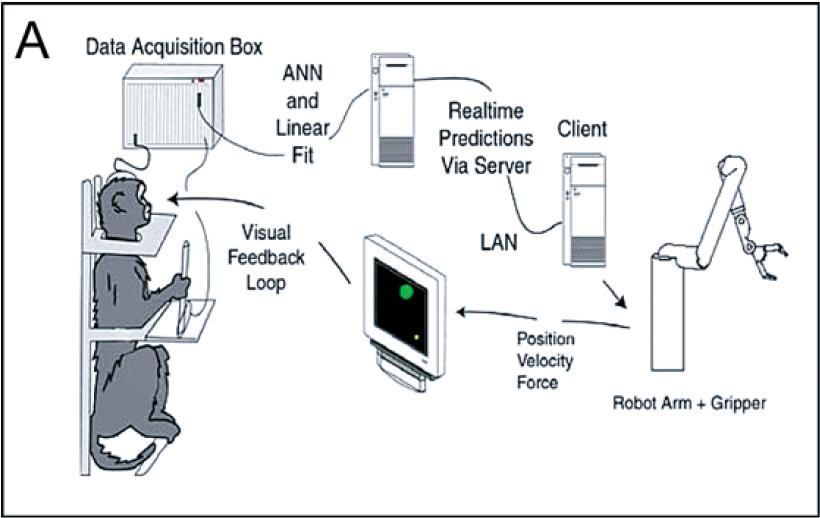

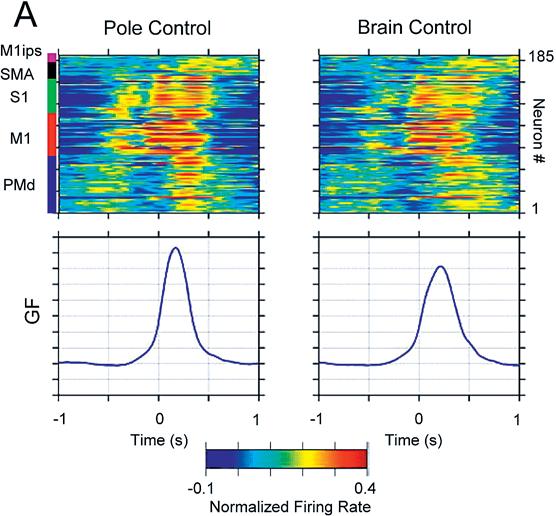

The subjects in this study were two adult female macaque monkeys, each previously trained to interact with a computer while seated in a chair. Recording arrays were implanted in primary motor cortex, dorsal premotor cortex, and the supplementary motor area. Monkey 1 also had a recording array in primary somatosensory cortex, and Monkey 2 also had one in the medial intraparietal area of posterior parietal cortex.

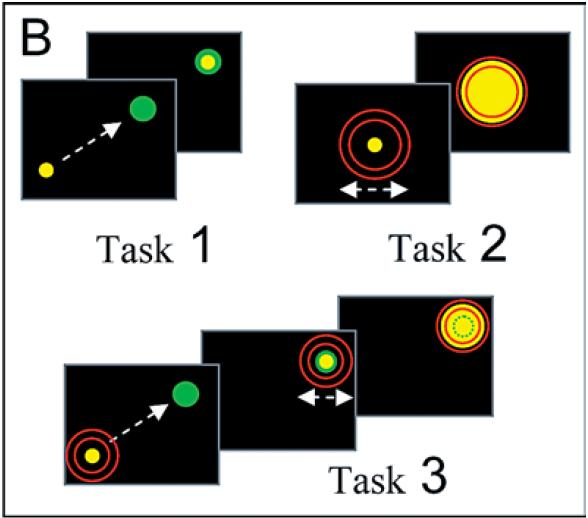

The experimental setup was as follows. The monkeys were seated in a restraint chair facing a computer screen. In some trials the monkeys controlled a cursor on the screen by moving and squeezing a pole with their left arm; in other trials the pole was absent. Multiunit recordings from the cortical areas listed above were passed to a data acquisition system. A linear model was trained on the neural recordings to predict hand position and gripping force, which set the cursor's position and size. In pole-control mode, the monkey's pole movements drove the robot arm directly; in brain-control mode, the linear model's predictions drove it. The cursor's position on the screen reflected the robot arm's position.

In task 1, the monkey simply had to move the cursor to a target on the screen, and was given a juice reward upon successful completion in less than 5 seconds.

In task 2, the monkey had to apply the correct amount of force to expand the cursor to the proper size.

Task 3 combined tasks 1 and 2: the monkey had to move the cursor to the target and then apply the correct amount of force.

The linear model was a simple linear regression that finds the weight matrix A minimizing the mean squared error between the actual training outputs Y and the predictions XA, where Y is the output matrix over time and X is the input matrix over time. A was found with the equation A = inv(X'X)X'Y, where inv() denotes matrix inversion and X' denotes the matrix transpose. The first 10 minutes of pole-control activity were used for training; the values of A were then frozen and used for prediction.
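The regression described above can be written in a few lines of NumPy. This sketch solves the normal equations (X'X)A = X'Y rather than forming inv(X'X) explicitly, which gives the same A but is numerically safer. The "freeze after training" step is modelled by fitting on the first n_train rows (standing in for the first 10 minutes of pole control) and reusing A unchanged afterwards; the bias column and simulated data in the check are assumptions.

```python
import numpy as np

def fit_weights(X, Y):
    """Least-squares weights A = inv(X'X) X'Y, computed by solving (X'X) A = X'Y."""
    return np.linalg.solve(X.T @ X, X.T @ Y)

def train_then_freeze(X, Y, n_train):
    """Fit A on the first n_train samples, then predict every later sample with A fixed.

    n_train stands in for the first 10 minutes of pole-control data (an assumed mapping).
    """
    A = fit_weights(X[:n_train], Y[:n_train])
    return A, X[n_train:] @ A
```

If X'X is close to singular (for example, with many highly correlated channels), `np.linalg.lstsq` is the more robust choice.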

- Results

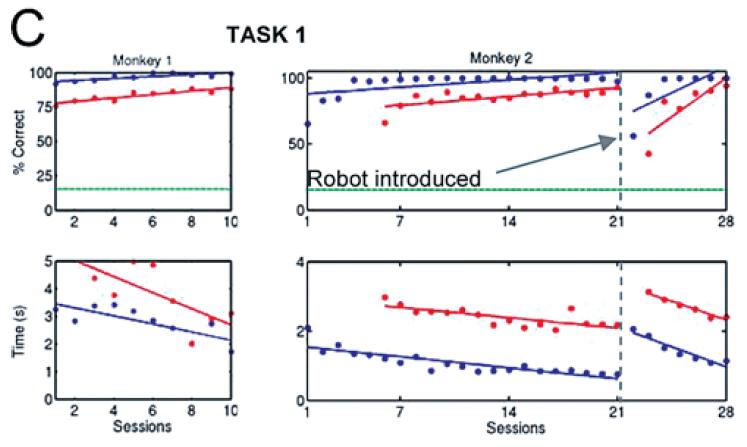

The monkeys learned the tasks in both pole control and brain control. The percentage of correct trials and the average time to complete a trial both improved in pole-control and brain-control modes. The model's predictions of the three parameters were very stable over time.

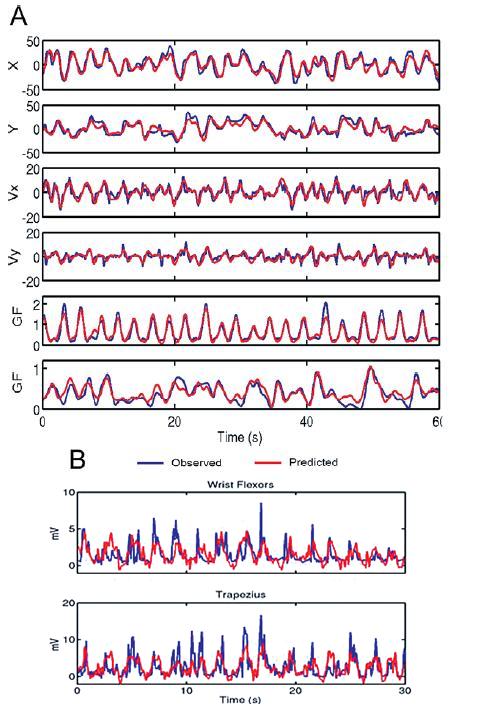

The linear model predicted hand position, velocity, and gripping force very accurately (Figure 2A-B), but predicted actual muscle activity (EMG) less well. Each recorded area contributed some information about all three parameters, to differing degrees; primary motor cortex appeared to contain the most information about every parameter. Single-unit recordings contain more information than multiunit recordings, but that deficit can be overcome by recording from more channels.
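The claim that more channels can compensate for less informative ones is usually examined with a "neuron-dropping" analysis: decode from random subsets of units of increasing size and plot held-out accuracy against ensemble size. The sketch below runs that analysis on simulated, weakly tuned units. The simulated data, subset sizes, and repeat count are hypothetical and are not the paper's data.

```python
import numpy as np

def heldout_r(rates, target, n_train):
    """Fit a linear model on the first n_train samples; return Pearson r on the remainder."""
    X = np.column_stack([rates, np.ones(len(rates))])
    w, *_ = np.linalg.lstsq(X[:n_train], target[:n_train], rcond=None)
    return np.corrcoef(X[n_train:] @ w, target[n_train:])[0, 1]

def neuron_dropping_curve(rates, target, sizes, n_train, n_repeats=20, seed=0):
    """Mean held-out r over random unit subsets of each size in `sizes`.

    n_repeats and the subset sizes are hypothetical analysis choices.
    """
    rng = np.random.default_rng(seed)
    n_units = rates.shape[1]
    curve = []
    for n in sizes:
        rs = [heldout_r(rates[:, rng.choice(n_units, size=n, replace=False)], target, n_train)
              for _ in range(n_repeats)]
        curve.append(np.mean(rs))
    return np.array(curve)
```

On data where each unit is only weakly informative, the curve rises steadily with ensemble size, which is the pattern behind the preference for large ensembles.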

With training, the information extracted from each brain region increased. Before training, M1 had the best predictive power, with a correlation coefficient of ~0.4, whereas the other areas were around 0.25–0.3. After training, all areas had correlation coefficients close to 0.6.

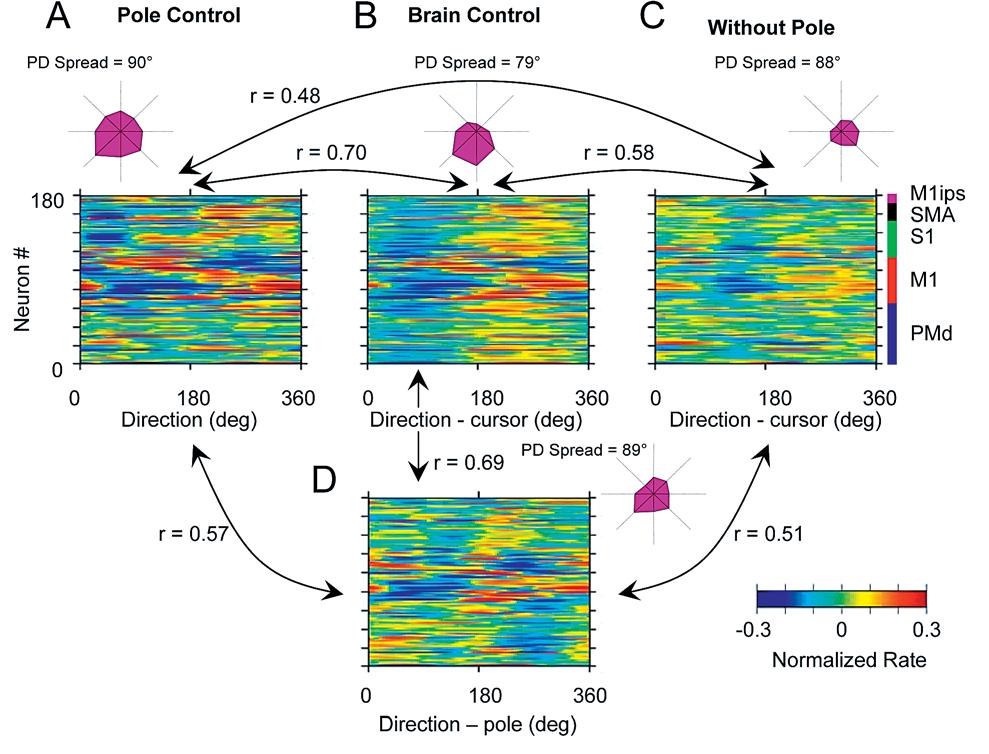

Figure 4A-D: In pole control, the neural ensemble as a whole responded evenly across all movement directions; in brain control, the ensemble's response was greatest over a narrower range of directions, biased toward one particular direction. The r values indicate how strongly the tuning plots for different conditions correlate with one another. This shows that the neurons change their response properties in different situations.

Individual neurons had a higher tuning depth during direct pole control. Tuning depth is the maximum response minus the minimum response; it is a measure of selectivity. In general, tuning depth was greater during pole control than in any other condition. This was not uniform, however: some neurons were more selective in pole control, while others were more selective during brain control.
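Preferred direction and tuning depth are commonly estimated by fitting a cosine tuning curve, rate = b0 + b1 cos θ + b2 sin θ, to a neuron's mean firing rate at each movement direction. The sketch below does this by least squares. Following the definition in these notes, depth is the maximum minus the minimum of the fitted curve, which for a cosine is twice its amplitude; the paper may normalize depth differently, so treat this as an illustration rather than the authors' exact measure.

```python
import numpy as np

def fit_cosine_tuning(directions_rad, mean_rates):
    """Fit rate = b0 + b1*cos(theta) + b2*sin(theta) by least squares.

    The cosine model is an assumed tuning model. Returns (baseline,
    preferred_direction_rad, tuning_depth), where depth is the maximum minus
    the minimum of the fitted curve (2 * amplitude), as defined in these notes.
    """
    theta = np.asarray(directions_rad, dtype=float)
    X = np.column_stack([np.ones_like(theta), np.cos(theta), np.sin(theta)])
    b0, b1, b2 = np.linalg.lstsq(X, np.asarray(mean_rates, dtype=float), rcond=None)[0]
    preferred = np.arctan2(b2, b1) % (2 * np.pi)   # direction of peak firing
    depth = 2.0 * np.hypot(b1, b2)                 # peak-to-trough of the fitted cosine
    return b0, preferred, depth
```

At least three distinct directions are needed to determine the three coefficients; eight evenly spaced directions, as in the check below, make the fit well conditioned.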

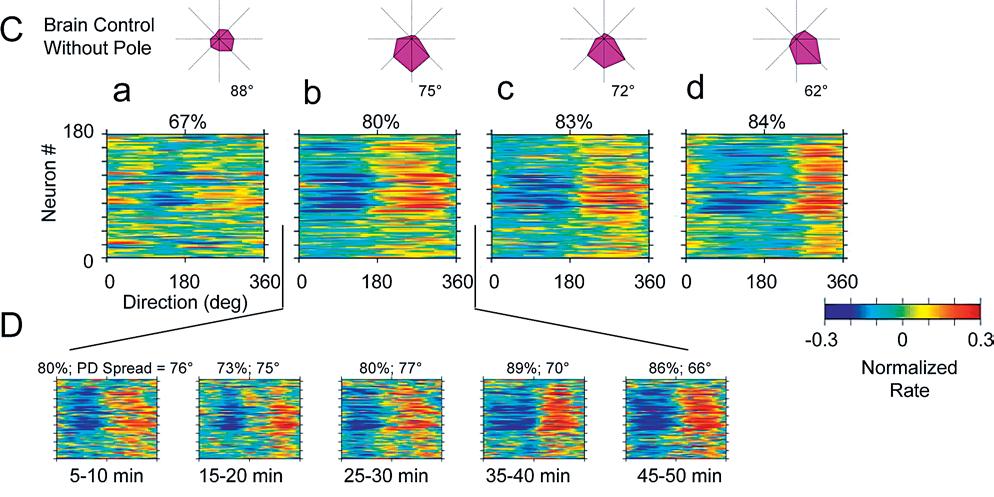

During pole control, the ensemble's directional tuning narrowed and rotated slightly over training, while individual neurons maintained a high tuning depth. In early sessions, the ensemble's responses were scattered over all angles; in later sessions, the neurons responded more consistently to a particular direction (Figure 5C-D).

Neural firing for gripping force is very similar in pole control and brain control (Figure 6A). Here, M1 consistently has the most predictive correlation with gripping force, followed by S1. Both of these improved over training, while neurons from other areas did not exhibit more predictive power.

Position and force trajectories are very similar in pole control and brain control, and in both monkeys gripping force was the best predicted followed by position, and then velocity.

- Summary

-Monkeys can learn to operate a robotic arm without overt self-arm movements.

-Large ensembles of neurons are preferred.

-Accurate predictions can be made with multiunit recordings, not only with single-unit recordings.

-Motor programming and execution are highly distributed in frontal and parietal areas.

-Neurons change their receptive fields with training, indicating that preexisting networks can assimilate a representation of the robotic arm and possibly lead to a sense of "ownership."

- Discussion

There is considerable debate about what exactly the motor cortex encodes in primates: whether neurons there directly control muscles, encode movement intentions, or simply respond to movement of the body (Todorov 2000, Georgopoulos et al. 1989, Johnson et al. 2001).

This paper shows that it is very difficult to separate the contribution of the motor cortex from that of the surrounding areas. All recorded areas contained information about arm movements. The authors claim that, with enough data, any of these areas could control a brain-machine interface, so primary motor cortex could be bypassed entirely. While this is good news for patients with damage to motor cortex, it complicates the discussion about the exact role of motor cortex. It is clear, however, that motor cortex operates in tandem with the surrounding areas to produce movements.

The other major claim of this paper is that neurons in these motor control areas can vary significantly in their receptive fields, and that these fields can change with training. Some neurons lost tuning depth when arm movements were absent, while others became more sharply tuned without arm movement. Some lost their tuning during brain control even while the monkey was still moving its arm. The tuning directions and depths of the neurons changed over time, showing that motor cortex can be modified with training. This implies that the motor homunculus is probably not static, but use- and experience-dependent. This is a good sign for patients hoping to control a robot arm in the future: with time, the artificial arm may be incorporated into the person's body image and become, in effect, part of the body.

Using a linear model to interpret the multiunit recordings does not actually tell us much about the properties of those neurons. The model is simply a large matrix of weights, which for all intents and purposes is a black box, so we have replaced the black box of the brain with one of our own construction. Still, the fact that these recordings can be used for artificial limb control is a positive result. That monkeys can learn to control robotic arms with a fairly high degree of sophistication is encouraging for patients seeking artificial limb replacements, and these results suggest that a large degree of function may be restored to amputees in the near future.

- References

Carmena JM, Lebedev MA, Crist RE, et al. (2003) Learning to control a brain-machine interface for reaching and grasping by primates. PLoS Biol 1(2): E42.

Georgopoulos AP, Lurito JT, Petrides M, Schwartz AB, Massey JT (1989) Mental rotation of the neuronal population vector. Science 243: 234–236.

Johnson MTV, Mason CR, Ebner TJ (2001) Central processes for the multiparametric control of arm movements in primates. Curr Opin Neurobiol 11: 684–688.

Pesaran B, Pezaris JS, Sahani M, Mitra PP, Andersen RA (2002) Temporal structure in neuronal activity during working memory in macaque parietal cortex. Nat Neurosci 5: 805–811.

Schwab ME (2002) Repairing the injured spinal cord. Science 295: 1029–1031.

Taylor DM, Tillery SI, Schwartz AB (2002) Direct cortical control of 3D neuroprosthetic devices. Science 296: 1829–1832.

Todorov E (2000) Direct cortical control of muscle activation in voluntary arm movements: A model. Nat Neurosci 3: 391–398.